diff --git a/examples/vision/detection/paddledetection/cpp/README.md b/examples/vision/detection/paddledetection/cpp/README.md

index d10be1525..0e944a465 100755

--- a/examples/vision/detection/paddledetection/cpp/README.md

+++ b/examples/vision/detection/paddledetection/cpp/README.md

@@ -41,6 +41,9 @@ tar xvf ppyoloe_crn_l_300e_coco.tgz

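The commands above apply only to Linux or macOS. For SDK usage on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)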

+

## PaddleDetection C++ API

### Model class

diff --git a/examples/vision/detection/yolov5/cpp/README.md b/examples/vision/detection/yolov5/cpp/README.md

index 61abe3275..c70d0d118 100755

--- a/examples/vision/detection/yolov5/cpp/README.md

+++ b/examples/vision/detection/yolov5/cpp/README.md

@@ -55,6 +55,9 @@ wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/0000000

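The commands above apply only to Linux or macOS. For SDK usage on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)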

+

## YOLOv5 C++ API

### YOLOv5 class

diff --git a/examples/vision/detection/yolov6/cpp/README.md b/examples/vision/detection/yolov6/cpp/README.md

index 765dde84c..eceb5bc46 100755

--- a/examples/vision/detection/yolov6/cpp/README.md

+++ b/examples/vision/detection/yolov6/cpp/README.md

@@ -33,6 +33,10 @@ wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/0000000

./infer_paddle_demo yolov6s_infer 000000014439.jpg 3

```

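+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)

+

+

To verify inference with the ONNX model, use the following commands: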

```bash

# Download the officially converted YOLOv6 ONNX model file and the test image

diff --git a/examples/vision/detection/yolov7/cpp/README.md b/examples/vision/detection/yolov7/cpp/README.md

index 5cab3cc95..5308f7ddb 100755

--- a/examples/vision/detection/yolov7/cpp/README.md

+++ b/examples/vision/detection/yolov7/cpp/README.md

@@ -31,6 +31,10 @@ wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/0000000

# Huawei Ascend inference

./infer_paddle_model_demo yolov7_infer 000000014439.jpg 3

```

+

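+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)

+

To verify inference with the ONNX model, use the following commands: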

```bash

# Download the officially converted YOLOv7 ONNX model file and the test image

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/CMakeLists.txt b/examples/vision/ocr/PP-OCRv2/cpp/CMakeLists.txt

index 93540a7e8..8b2f7aa61 100644

--- a/examples/vision/ocr/PP-OCRv2/cpp/CMakeLists.txt

+++ b/examples/vision/ocr/PP-OCRv2/cpp/CMakeLists.txt

@@ -12,3 +12,7 @@ include_directories(${FASTDEPLOY_INCS})

add_executable(infer_demo ${PROJECT_SOURCE_DIR}/infer.cc)

# Link against the FastDeploy libraries

target_link_libraries(infer_demo ${FASTDEPLOY_LIBS})

+

+add_executable(infer_static_shape_demo ${PROJECT_SOURCE_DIR}/infer_static_shape.cc)

+# Link against the FastDeploy libraries

+target_link_libraries(infer_static_shape_demo ${FASTDEPLOY_LIBS})

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/README.md b/examples/vision/ocr/PP-OCRv2/cpp/README.md

index e30d886d1..9052dd80e 100755

--- a/examples/vision/ocr/PP-OCRv2/cpp/README.md

+++ b/examples/vision/ocr/PP-OCRv2/cpp/README.md

@@ -43,13 +43,16 @@ wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_

./infer_demo ./ch_PP-OCRv2_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer ./ppocr_keys_v1.txt ./12.jpg 3

# KunlunXin XPU inference

./infer_demo ./ch_PP-OCRv2_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer ./ppocr_keys_v1.txt ./12.jpg 4

-# Huawei Ascend inference

-./infer_demo ./ch_PP-OCRv2_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer ./ppocr_keys_v1.txt ./12.jpg 5

+# Huawei Ascend inference requires the static-shape demo. To predict images continuously, prepare the input images at a uniform size.

+./infer_static_shape_demo ./ch_PP-OCRv2_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer ./ppocr_keys_v1.txt ./12.jpg 1

```

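The commands above apply only to Linux or macOS. For SDK usage on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)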

+

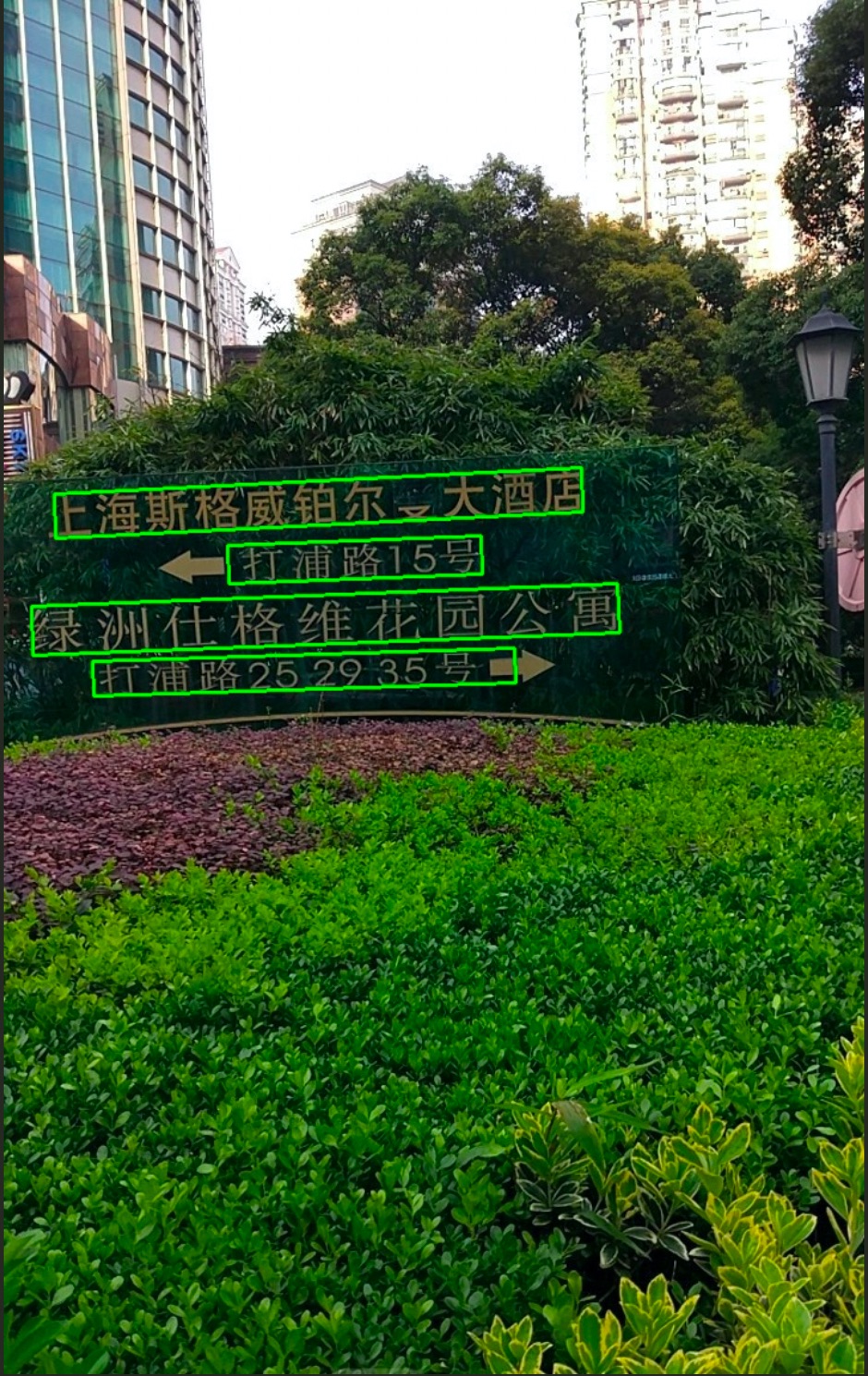

After the run completes, the visualized result is shown in the figure below.

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/infer.cc b/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

index 0248367cc..72a7fcf7e 100755

--- a/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

+++ b/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

@@ -55,10 +55,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

- // Users could enable static shape infer for rec model when deploy PP-OCR on hardware

- // which can not support dynamic shape infer well, like Huawei Ascend series.

- // rec_model.GetPreprocessor().SetStaticShapeInfer(true);

-

assert(det_model.Initialized());

assert(cls_model.Initialized());

assert(rec_model.Initialized());

@@ -70,9 +66,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

// Set inference batch size for cls model and rec model, the value could be -1 and 1 to positive infinity.

// When inference batch size is set to -1, it means that the inference batch size

// of the cls and rec models will be the same as the number of boxes detected by the det model.

- // When users enable static shape infer for rec model, the batch size of cls and rec model needs to be set to 1.

- // ppocr_v2.SetClsBatchSize(1);

- // ppocr_v2.SetRecBatchSize(1);

ppocr_v2.SetClsBatchSize(cls_batch_size);

ppocr_v2.SetRecBatchSize(rec_batch_size);

@@ -129,8 +122,6 @@ int main(int argc, char* argv[]) {

option.EnablePaddleToTrt();

} else if (flag == 4) {

option.UseKunlunXin();

- } else if (flag == 5) {

- option.UseAscend();

}

std::string det_model_dir = argv[1];

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc b/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc

new file mode 100755

index 000000000..ba5527a2e

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc

@@ -0,0 +1,107 @@

+// Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+//

+// Licensed under the Apache License, Version 2.0 (the "License");

+// you may not use this file except in compliance with the License.

+// You may obtain a copy of the License at

+//

+// http://www.apache.org/licenses/LICENSE-2.0

+//

+// Unless required by applicable law or agreed to in writing, software

+// distributed under the License is distributed on an "AS IS" BASIS,

+// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+// See the License for the specific language governing permissions and

+// limitations under the License.

+

+#include "fastdeploy/vision.h"

+#ifdef WIN32

+const char sep = '\\';

+#else

+const char sep = '/';

+#endif

+

+void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model_dir, const std::string& rec_model_dir, const std::string& rec_label_file, const std::string& image_file, const fastdeploy::RuntimeOption& option) {

+ auto det_model_file = det_model_dir + sep + "inference.pdmodel";

+ auto det_params_file = det_model_dir + sep + "inference.pdiparams";

+

+ auto cls_model_file = cls_model_dir + sep + "inference.pdmodel";

+ auto cls_params_file = cls_model_dir + sep + "inference.pdiparams";

+

+ auto rec_model_file = rec_model_dir + sep + "inference.pdmodel";

+ auto rec_params_file = rec_model_dir + sep + "inference.pdiparams";

+

+ auto det_option = option;

+ auto cls_option = option;

+ auto rec_option = option;

+

+ auto det_model = fastdeploy::vision::ocr::DBDetector(det_model_file, det_params_file, det_option);

+ auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

+ auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

+

+ // Enable static shape inference for the rec model when deploying PP-OCR on hardware

+ // that does not support dynamic shape inference well, such as the Huawei Ascend series.

+ rec_model.GetPreprocessor().SetStaticShapeInfer(true);

+

+ assert(det_model.Initialized());

+ assert(cls_model.Initialized());

+ assert(rec_model.Initialized());

+

+ // The classification model is optional, so the PP-OCR can also be connected in series as follows

+ // auto ppocr_v2 = fastdeploy::pipeline::PPOCRv2(&det_model, &rec_model);

+ auto ppocr_v2 = fastdeploy::pipeline::PPOCRv2(&det_model, &cls_model, &rec_model);

+

+ // When static shape inference is enabled for the rec model, the batch size of the cls and rec models must be set to 1.

+ ppocr_v2.SetClsBatchSize(1);

+ ppocr_v2.SetRecBatchSize(1);

+

+ if(!ppocr_v2.Initialized()){

+ std::cerr << "Failed to initialize PP-OCR." << std::endl;

+ return;

+ }

+

+ auto im = cv::imread(image_file);

+

+ fastdeploy::vision::OCRResult result;

+ if (!ppocr_v2.Predict(im, &result)) {

+ std::cerr << "Failed to predict." << std::endl;

+ return;

+ }

+

+ std::cout << result.Str() << std::endl;

+

+ auto vis_im = fastdeploy::vision::VisOcr(im, result);

+ cv::imwrite("vis_result.jpg", vis_im);

+ std::cout << "Visualized result saved in ./vis_result.jpg" << std::endl;

+}

+

+int main(int argc, char* argv[]) {

+ if (argc < 7) {

+ std::cout << "Usage: infer_static_shape_demo path/to/det_model path/to/cls_model "

+ "path/to/rec_model path/to/rec_label_file path/to/image "

+ "run_option, "

+ "e.g. ./infer_static_shape_demo ./ch_PP-OCRv2_det_infer "

+ "./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer "

+ "./ppocr_keys_v1.txt ./12.jpg 0"

+ << std::endl;

+ std::cout << "The data type of run_option is int, 0: run with cpu; 1: run "

+ "with ascend."

+ << std::endl;

+ return -1;

+ }

+

+ fastdeploy::RuntimeOption option;

+ int flag = std::atoi(argv[6]);

+

+ if (flag == 0) {

+ option.UseCpu();

+ } else if (flag == 1) {

+ option.UseAscend();

+ }

+

+ std::string det_model_dir = argv[1];

+ std::string cls_model_dir = argv[2];

+ std::string rec_model_dir = argv[3];

+ std::string rec_label_file = argv[4];

+ std::string test_image = argv[5];

+ InitAndInfer(det_model_dir, cls_model_dir, rec_model_dir, rec_label_file, test_image, option);

+ return 0;

+}

diff --git a/examples/vision/ocr/PP-OCRv2/python/README.md b/examples/vision/ocr/PP-OCRv2/python/README.md

index 270225ab7..1ea95695f 100755

--- a/examples/vision/ocr/PP-OCRv2/python/README.md

+++ b/examples/vision/ocr/PP-OCRv2/python/README.md

@@ -36,8 +36,8 @@ python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2

python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

# KunlunXin XPU inference

python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device kunlunxin

-# Huawei Ascend inference

-python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

+# Huawei Ascend inference requires the static-shape script. To predict images continuously, prepare the input images at a uniform size.

+python infer_static_shape.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

```

After the run completes, the visualized result is shown in the figure below.

diff --git a/examples/vision/ocr/PP-OCRv2/python/infer.py b/examples/vision/ocr/PP-OCRv2/python/infer.py

index f7373b4c2..6e8fe62b1 100755

--- a/examples/vision/ocr/PP-OCRv2/python/infer.py

+++ b/examples/vision/ocr/PP-OCRv2/python/infer.py

@@ -58,43 +58,113 @@ def parse_arguments():

type=int,

default=9,

help="Number of threads while inference on CPU.")

+ parser.add_argument(

+ "--cls_bs",

+ type=int,

+ default=1,

+ help="Classification model inference batch size.")

+ parser.add_argument(

+ "--rec_bs",

+ type=int,

+ default=6,

+ help="Recognition model inference batch size")

return parser.parse_args()

def build_option(args):

- option = fd.RuntimeOption()

- if args.device.lower() == "gpu":

- option.use_gpu(0)

- option.set_cpu_thread_num(args.cpu_thread_num)

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ det_option.set_cpu_thread_num(args.cpu_thread_num)

+ cls_option.set_cpu_thread_num(args.cpu_thread_num)

+ rec_option.set_cpu_thread_num(args.cpu_thread_num)

+

+ if args.device.lower() == "gpu":

+ det_option.use_gpu(args.device_id)

+ cls_option.use_gpu(args.device_id)

+ rec_option.use_gpu(args.device_id)

if args.device.lower() == "kunlunxin":

- option.use_kunlunxin()

- return option

+ det_option.use_kunlunxin()

+ cls_option.use_kunlunxin()

+ rec_option.use_kunlunxin()

- if args.device.lower() == "ascend":

- option.use_ascend()

- return option

+ return det_option, cls_option, rec_option

if args.backend.lower() == "trt":

assert args.device.lower(

) == "gpu", "TensorRT backend require inference on device GPU."

- option.use_trt_backend()

+ det_option.use_trt_backend()

+ cls_option.use_trt_backend()

+ rec_option.use_trt_backend()

+

+ # Set the TRT input shapes

+ # If you change the detection model's input shape, we recommend setting its width and height to multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

+ [args.rec_bs, 3, 32, 320],

+ [args.rec_bs, 3, 32, 2304])

+

+ # Users can save the TRT engine files locally

+ det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

+ cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

+ rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

+

elif args.backend.lower() == "pptrt":

assert args.device.lower(

) == "gpu", "Paddle-TensorRT backend require inference on device GPU."

- option.use_trt_backend()

- option.enable_paddle_trt_collect_shape()

- option.enable_paddle_to_trt()

+ det_option.use_trt_backend()

+ det_option.enable_paddle_trt_collect_shape()

+ det_option.enable_paddle_to_trt()

+

+ cls_option.use_trt_backend()

+ cls_option.enable_paddle_trt_collect_shape()

+ cls_option.enable_paddle_to_trt()

+

+ rec_option.use_trt_backend()

+ rec_option.enable_paddle_trt_collect_shape()

+ rec_option.enable_paddle_to_trt()

+

+ # Set the TRT input shapes

+ # If you change the detection model's input shape, we recommend setting its width and height to multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

+ [args.rec_bs, 3, 32, 320],

+ [args.rec_bs, 3, 32, 2304])

+

+ # Users can save the TRT engine files locally

+ det_option.set_trt_cache_file(args.det_model)

+ cls_option.set_trt_cache_file(args.cls_model)

+ rec_option.set_trt_cache_file(args.rec_model)

+

elif args.backend.lower() == "ort":

- option.use_ort_backend()

+ det_option.use_ort_backend()

+ cls_option.use_ort_backend()

+ rec_option.use_ort_backend()

+

elif args.backend.lower() == "paddle":

- option.use_paddle_infer_backend()

+ det_option.use_paddle_infer_backend()

+ cls_option.use_paddle_infer_backend()

+ rec_option.use_paddle_infer_backend()

+

elif args.backend.lower() == "openvino":

assert args.device.lower(

) == "cpu", "OpenVINO backend require inference on device CPU."

- option.use_openvino_backend()

- return option

+ det_option.use_openvino_backend()

+ cls_option.use_openvino_backend()

+ rec_option.use_openvino_backend()

+

+ return det_option, cls_option, rec_option

args = parse_arguments()

@@ -111,49 +181,18 @@ rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

rec_label_file = args.rec_label_file

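# All three models use the same deployment configuration

-# Users can also configure each model separately as needed

-runtime_option = build_option(args)

+# Users can also customize the configuration to suit their needs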

+det_option, cls_option, rec_option = build_option(args)

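-# The cls and rec models of PPOCR now support inference on a batch of data

-# The two variables defined below are used to set the TRT input shapes and, after the PPOCR model is initialized, to configure batch inference

-# When deploying PP-OCR on devices with limited support for dynamic shape inference (e.g. Huawei Ascend),

-# cls_batch_size and rec_batch_size must both be set to 1.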

-cls_batch_size = 1

-rec_batch_size = 6

-

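-# When using TRT, set the dynamic shapes for the runtime of each of the three models and create the models.

-# Note: set the classification model's dynamic input and create it only after the detection model has been created; the same applies to the recognition model.

-# If you change the detection model's input shape, we recommend setting its width and height to multiples of 32.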

-det_option = runtime_option

-det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

- [1, 3, 960, 960])

-# Users can save the TRT engine file locally

-# det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

det_model = fd.vision.ocr.DBDetector(

det_model_file, det_params_file, runtime_option=det_option)

-cls_option = runtime_option

-cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

- [cls_batch_size, 3, 48, 320],

- [cls_batch_size, 3, 48, 1024])

-# Users can save the TRT engine file locally

-# cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

cls_model = fd.vision.ocr.Classifier(

cls_model_file, cls_params_file, runtime_option=cls_option)

-rec_option = runtime_option

-rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

- [rec_batch_size, 3, 32, 320],

- [rec_batch_size, 3, 32, 2304])

-# Users can save the TRT engine file locally

-# rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

rec_model = fd.vision.ocr.Recognizer(

rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

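-# When deploying PP-OCR on devices with limited support for dynamic shape inference (e.g. Huawei Ascend),

-# use the following line to enable static shape inference for the rec model.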

-# rec_model.preprocessor.static_shape_infer = True

-

# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed

ppocr_v2 = fd.vision.ocr.PPOCRv2(

det_model=det_model, cls_model=cls_model, rec_model=rec_model)

@@ -161,8 +200,8 @@ ppocr_v2 = fd.vision.ocr.PPOCRv2(

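# Set the inference batch size for the cls and rec models

# The value can be -1, or any integer from 1 upward

# When the value is -1, the batch size of the cls and rec models defaults to the number of boxes detected by the det model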

-ppocr_v2.cls_batch_size = cls_batch_size

-ppocr_v2.rec_batch_size = rec_batch_size

+ppocr_v2.cls_batch_size = args.cls_bs

+ppocr_v2.rec_batch_size = args.rec_bs

# Prepare the image for prediction

im = cv2.imread(args.image)
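
Review note: the new `build_option` derives the TRT `opt` and `max` shapes from `--cls_bs` and `--rec_bs`, while the comments above still allow a batch size of -1. The sketch below is a minimal, standalone check under the assumption that a usable shape range needs `min <= opt <= max` in every dimension. `check_shape_range` and `rec_shape_range` are hypothetical helpers written for this note and are not part of FastDeploy; whether FastDeploy performs such a check itself is not stated in this diff.

```python
from typing import List, Sequence, Tuple


def check_shape_range(min_shape: Sequence[int],
                      opt_shape: Sequence[int],
                      max_shape: Sequence[int]) -> bool:
    """Return True if min <= opt <= max holds in every dimension."""
    if not (len(min_shape) == len(opt_shape) == len(max_shape)):
        return False
    return all(lo <= mid <= hi
               for lo, mid, hi in zip(min_shape, opt_shape, max_shape))


def rec_shape_range(rec_bs: int) -> Tuple[List[int], List[int], List[int]]:
    """Rebuild the (min, opt, max) triple that the diff passes for the rec model."""
    return [1, 3, 32, 10], [rec_bs, 3, 32, 320], [rec_bs, 3, 32, 2304]


if __name__ == "__main__":
    # Try the default batch size, the static-shape batch size, and -1.
    for bs in (6, 1, -1):
        print(bs, check_shape_range(*rec_shape_range(bs)))
```

Under this assumption, a batch size of -1 places -1 in the batch dimension of `opt` and `max`, which falls below the `min` value of 1, so it may be worth rejecting or special-casing -1 before the TRT shapes are set.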

diff --git a/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py b/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py

new file mode 100755

index 000000000..29055fdaa

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py

@@ -0,0 +1,114 @@

+# Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import fastdeploy as fd

+import cv2

+import os

+

+

+def parse_arguments():

+ import argparse

+ import ast

+ parser = argparse.ArgumentParser()

+ parser.add_argument(

+ "--det_model", required=True, help="Path of Detection model of PPOCR.")

+ parser.add_argument(

+ "--cls_model",

+ required=True,

+ help="Path of Classification model of PPOCR.")

+ parser.add_argument(

+ "--rec_model",

+ required=True,

+ help="Path of Recognition model of PPOCR.")

+ parser.add_argument(

+ "--rec_label_file",

+ required=True,

+ help="Path of Recognition label file of PPOCR.")

+ parser.add_argument(

+ "--image", type=str, required=True, help="Path of test image file.")

+ parser.add_argument(

+ "--device",

+ type=str,

+ default='cpu',

+ help="Type of inference device, support 'cpu' or 'ascend'.")

+ parser.add_argument(

+ "--cpu_thread_num",

+ type=int,

+ default=9,

+ help="Number of threads while inference on CPU.")

+ return parser.parse_args()

+

+

+def build_option(args):

+

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ # Ascend is currently the only hardware that requires static shape inference for PP-OCR.

+ if args.device.lower() == "ascend":

+ det_option.use_ascend()

+ cls_option.use_ascend()

+ rec_option.use_ascend()

+

+ return det_option, cls_option, rec_option

+

+

+args = parse_arguments()

+

+# Detection model: detects text boxes

+det_model_file = os.path.join(args.det_model, "inference.pdmodel")

+det_params_file = os.path.join(args.det_model, "inference.pdiparams")

+# Classification model: orientation classification, optional

+cls_model_file = os.path.join(args.cls_model, "inference.pdmodel")

+cls_params_file = os.path.join(args.cls_model, "inference.pdiparams")

+# Recognition model: text recognition

+rec_model_file = os.path.join(args.rec_model, "inference.pdmodel")

+rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

+rec_label_file = args.rec_label_file

+

+det_option, cls_option, rec_option = build_option(args)

+

+det_model = fd.vision.ocr.DBDetector(

+ det_model_file, det_params_file, runtime_option=det_option)

+

+cls_model = fd.vision.ocr.Classifier(

+ cls_model_file, cls_params_file, runtime_option=cls_option)

+

+rec_model = fd.vision.ocr.Recognizer(

+ rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

+

+# Enable static shape inference for the rec model

+rec_model.preprocessor.static_shape_infer = True

+

+# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed

+ppocr_v2 = fd.vision.ocr.PPOCRv2(

+ det_model=det_model, cls_model=cls_model, rec_model=rec_model)

+

+# With static shape inference enabled, the batch size of the cls and rec models must be set to 1

+ppocr_v2.cls_batch_size = 1

+ppocr_v2.rec_batch_size = 1

+

+# Prepare the image for prediction

+im = cv2.imread(args.image)

+

+# Predict and print the result

+result = ppocr_v2.predict(im)

+

+print(result)

+

+# Visualize the result

+vis_im = fd.vision.vis_ppocr(im, result)

+cv2.imwrite("visualized_result.jpg", vis_im)

+print("Visualized result saved in ./visualized_result.jpg")
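
Review note: the README change asks users to prepare input images at a uniform size when predicting continuously on Ascend, but the diff does not show how. One possible way, sketched below, is to scale each image to fit a fixed canvas while keeping its aspect ratio and pad the remainder. `fit_to_static_shape` is a hypothetical helper, and the 640x640 target is an arbitrary placeholder rather than a value required by FastDeploy or Ascend.

```python
from typing import Tuple


def fit_to_static_shape(height: int, width: int,
                        target_h: int = 640,
                        target_w: int = 640) -> Tuple[int, int, int, int]:
    """Compute an aspect-preserving resize plus padding to a fixed canvas.

    Returns (new_h, new_w, pad_bottom, pad_right), so that
    new_h + pad_bottom == target_h and new_w + pad_right == target_w.
    """
    if height <= 0 or width <= 0:
        raise ValueError("height and width must be positive")
    # Use the smaller scale factor so the resized image fits inside the canvas.
    scale = min(target_h / height, target_w / width)
    new_h = max(1, min(target_h, round(height * scale)))
    new_w = max(1, min(target_w, round(width * scale)))
    return new_h, new_w, target_h - new_h, target_w - new_w


if __name__ == "__main__":
    for h, w in ((480, 640), (1280, 960), (640, 640)):
        print((h, w), fit_to_static_shape(h, w))
```

The returned sizes could then be applied with an image library of choice (for example a resize followed by constant-value border padding) before the image is passed to `predict`.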

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt b/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

index 93540a7e8..8b2f7aa61 100644

--- a/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

+++ b/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

@@ -12,3 +12,7 @@ include_directories(${FASTDEPLOY_INCS})

add_executable(infer_demo ${PROJECT_SOURCE_DIR}/infer.cc)

# Link against the FastDeploy libraries

target_link_libraries(infer_demo ${FASTDEPLOY_LIBS})

+

+add_executable(infer_static_shape_demo ${PROJECT_SOURCE_DIR}/infer_static_shape.cc)

+# Link against the FastDeploy libraries

+target_link_libraries(infer_static_shape_demo ${FASTDEPLOY_LIBS})

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/README.md b/examples/vision/ocr/PP-OCRv3/cpp/README.md

index 6f48a69ac..7f557a213 100755

--- a/examples/vision/ocr/PP-OCRv3/cpp/README.md

+++ b/examples/vision/ocr/PP-OCRv3/cpp/README.md

@@ -43,13 +43,16 @@ wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_

./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 3

# KunlunXin XPU inference

./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 4

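-# Huawei Ascend inference: enable static shape inference for the rec model in the code, and set the inference batch size of the classification and recognition models to 1.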

-./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 5

+# Huawei Ascend inference requires the static-shape demo. To predict images continuously, prepare the input images at a uniform size.

+./infer_static_shape_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 1

```

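The commands above apply only to Linux or macOS. For SDK usage on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment before deploying as described in:

+- [How to deploy on a Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)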

+

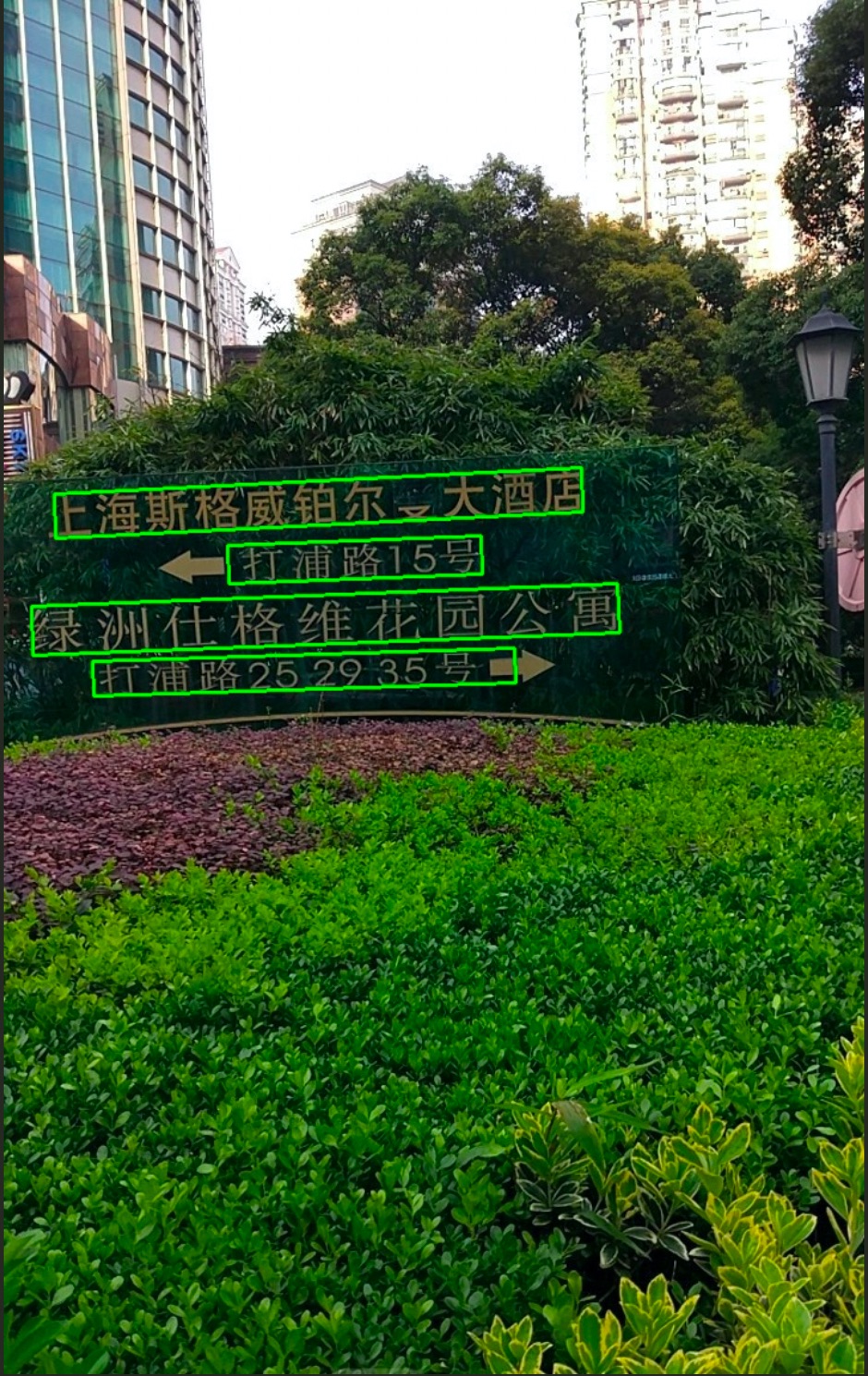

After the run completes, the visualized result is shown in the figure below.

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/infer.cc b/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

index 0248367cc..72a7fcf7e 100755

--- a/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

+++ b/examples/vision/ocr/PP-OCRv2/cpp/infer.cc

@@ -55,10 +55,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

- // Users could enable static shape infer for rec model when deploy PP-OCR on hardware

- // which can not support dynamic shape infer well, like Huawei Ascend series.

- // rec_model.GetPreprocessor().SetStaticShapeInfer(true);

-

assert(det_model.Initialized());

assert(cls_model.Initialized());

assert(rec_model.Initialized());

@@ -70,9 +66,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

// Set inference batch size for cls model and rec model, the value could be -1 and 1 to positive infinity.

// When inference batch size is set to -1, it means that the inference batch size

// of the cls and rec models will be the same as the number of boxes detected by the det model.

- // When users enable static shape infer for rec model, the batch size of cls and rec model needs to be set to 1.

- // ppocr_v2.SetClsBatchSize(1);

- // ppocr_v2.SetRecBatchSize(1);

ppocr_v2.SetClsBatchSize(cls_batch_size);

ppocr_v2.SetRecBatchSize(rec_batch_size);

@@ -129,8 +122,6 @@ int main(int argc, char* argv[]) {

option.EnablePaddleToTrt();

} else if (flag == 4) {

option.UseKunlunXin();

- } else if (flag == 5) {

- option.UseAscend();

}

std::string det_model_dir = argv[1];

diff --git a/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc b/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc

new file mode 100755

index 000000000..ba5527a2e

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv2/cpp/infer_static_shape.cc

@@ -0,0 +1,107 @@

+// Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+//

+// Licensed under the Apache License, Version 2.0 (the "License");

+// you may not use this file except in compliance with the License.

+// You may obtain a copy of the License at

+//

+// http://www.apache.org/licenses/LICENSE-2.0

+//

+// Unless required by applicable law or agreed to in writing, software

+// distributed under the License is distributed on an "AS IS" BASIS,

+// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+// See the License for the specific language governing permissions and

+// limitations under the License.

+

+#include "fastdeploy/vision.h"

+#ifdef WIN32

+const char sep = '\\';

+#else

+const char sep = '/';

+#endif

+

+void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model_dir, const std::string& rec_model_dir, const std::string& rec_label_file, const std::string& image_file, const fastdeploy::RuntimeOption& option) {

+ auto det_model_file = det_model_dir + sep + "inference.pdmodel";

+ auto det_params_file = det_model_dir + sep + "inference.pdiparams";

+

+ auto cls_model_file = cls_model_dir + sep + "inference.pdmodel";

+ auto cls_params_file = cls_model_dir + sep + "inference.pdiparams";

+

+ auto rec_model_file = rec_model_dir + sep + "inference.pdmodel";

+ auto rec_params_file = rec_model_dir + sep + "inference.pdiparams";

+

+ auto det_option = option;

+ auto cls_option = option;

+ auto rec_option = option;

+

+ auto det_model = fastdeploy::vision::ocr::DBDetector(det_model_file, det_params_file, det_option);

+ auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

+ auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

+

+ // Users could enable static shape infer for rec model when deploy PP-OCR on hardware

+ // which can not support dynamic shape infer well, like Huawei Ascend series.

+ rec_model.GetPreprocessor().SetStaticShapeInfer(true);

+

+ assert(det_model.Initialized());

+ assert(cls_model.Initialized());

+ assert(rec_model.Initialized());

+

+ // The classification model is optional, so the PP-OCR can also be connected in series as follows

+ // auto ppocr_v2 = fastdeploy::pipeline::PPOCRv2(&det_model, &rec_model);

+ auto ppocr_v2 = fastdeploy::pipeline::PPOCRv2(&det_model, &cls_model, &rec_model);

+

+ // When users enable static shape infer for rec model, the batch size of cls and rec model must to be set to 1.

+ ppocr_v2.SetClsBatchSize(1);

+ ppocr_v2.SetRecBatchSize(1);

+

+ if(!ppocr_v2.Initialized()){

+ std::cerr << "Failed to initialize PP-OCR." << std::endl;

+ return;

+ }

+

+ auto im = cv::imread(image_file);

+

+ fastdeploy::vision::OCRResult result;

+ if (!ppocr_v2.Predict(im, &result)) {

+ std::cerr << "Failed to predict." << std::endl;

+ return;

+ }

+

+ std::cout << result.Str() << std::endl;

+

+ auto vis_im = fastdeploy::vision::VisOcr(im, result);

+ cv::imwrite("vis_result.jpg", vis_im);

+ std::cout << "Visualized result saved in ./vis_result.jpg" << std::endl;

+}

+

+int main(int argc, char* argv[]) {

+ if (argc < 7) {

+ std::cout << "Usage: infer_demo path/to/det_model path/to/cls_model "

+ "path/to/rec_model path/to/rec_label_file path/to/image "

+ "run_option, "

+ "e.g ./infer_demo ./ch_PP-OCRv2_det_infer "

+ "./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv2_rec_infer "

+ "./ppocr_keys_v1.txt ./12.jpg 0"

+ << std::endl;

+ std::cout << "The data type of run_option is int, 0: run with cpu; 1: run "

+ "with ascend."

+ << std::endl;

+ return -1;

+ }

+

+ fastdeploy::RuntimeOption option;

+ int flag = std::atoi(argv[6]);

+

+ if (flag == 0) {

+ option.UseCpu();

+ } else if (flag == 1) {

+ option.UseAscend();

+ }

+

+ std::string det_model_dir = argv[1];

+ std::string cls_model_dir = argv[2];

+ std::string rec_model_dir = argv[3];

+ std::string rec_label_file = argv[4];

+ std::string test_image = argv[5];

+ InitAndInfer(det_model_dir, cls_model_dir, rec_model_dir, rec_label_file, test_image, option);

+ return 0;

+}

diff --git a/examples/vision/ocr/PP-OCRv2/python/README.md b/examples/vision/ocr/PP-OCRv2/python/README.md

index 270225ab7..1ea95695f 100755

--- a/examples/vision/ocr/PP-OCRv2/python/README.md

+++ b/examples/vision/ocr/PP-OCRv2/python/README.md

@@ -36,8 +36,8 @@ python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2

python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

# 昆仑芯XPU推理

python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device kunlunxin

-# 华为昇腾推理

-python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

+# 华为昇腾推理,需要使用静态shape脚本, 若用户需要连续地预测图片, 输入图片尺寸需要准备为统一尺寸

+python infer_static_shape.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

```

运行完成可视化结果如下图所示

diff --git a/examples/vision/ocr/PP-OCRv2/python/infer.py b/examples/vision/ocr/PP-OCRv2/python/infer.py

index f7373b4c2..6e8fe62b1 100755

--- a/examples/vision/ocr/PP-OCRv2/python/infer.py

+++ b/examples/vision/ocr/PP-OCRv2/python/infer.py

@@ -58,43 +58,113 @@ def parse_arguments():

type=int,

default=9,

help="Number of threads while inference on CPU.")

+ parser.add_argument(

+ "--cls_bs",

+ type=int,

+ default=1,

+ help="Classification model inference batch size.")

+ parser.add_argument(

+ "--rec_bs",

+ type=int,

+ default=6,

+ help="Recognition model inference batch size")

return parser.parse_args()

def build_option(args):

- option = fd.RuntimeOption()

- if args.device.lower() == "gpu":

- option.use_gpu(0)

- option.set_cpu_thread_num(args.cpu_thread_num)

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ det_option.set_cpu_thread_num(args.cpu_thread_num)

+ cls_option.set_cpu_thread_num(args.cpu_thread_num)

+ rec_option.set_cpu_thread_num(args.cpu_thread_num)

+

+ if args.device.lower() == "gpu":

+ det_option.use_gpu(args.device_id)

+ cls_option.use_gpu(args.device_id)

+ rec_option.use_gpu(args.device_id)

if args.device.lower() == "kunlunxin":

- option.use_kunlunxin()

- return option

+ det_option.use_kunlunxin()

+ cls_option.use_kunlunxin()

+ rec_option.use_kunlunxin()

- if args.device.lower() == "ascend":

- option.use_ascend()

- return option

+ return det_option, cls_option, rec_option

if args.backend.lower() == "trt":

assert args.device.lower(

) == "gpu", "TensorRT backend requires inference on device GPU."

- option.use_trt_backend()

+ det_option.use_trt_backend()

+ cls_option.use_trt_backend()

+ rec_option.use_trt_backend()

+

+ # Set the TRT input shapes.

+ # If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

+ [args.rec_bs, 3, 32, 320],

+ [args.rec_bs, 3, 32, 2304])

+

+ # The TRT engine file can be cached to local disk.

+ det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

+ cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

+ rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

+

elif args.backend.lower() == "pptrt":

assert args.device.lower(

) == "gpu", "Paddle-TensorRT backend requires inference on device GPU."

- option.use_trt_backend()

- option.enable_paddle_trt_collect_shape()

- option.enable_paddle_to_trt()

+ det_option.use_trt_backend()

+ det_option.enable_paddle_trt_collect_shape()

+ det_option.enable_paddle_to_trt()

+

+ cls_option.use_trt_backend()

+ cls_option.enable_paddle_trt_collect_shape()

+ cls_option.enable_paddle_to_trt()

+

+ rec_option.use_trt_backend()

+ rec_option.enable_paddle_trt_collect_shape()

+ rec_option.enable_paddle_to_trt()

+

+ # Set the TRT input shapes.

+ # If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

+ [args.rec_bs, 3, 32, 320],

+ [args.rec_bs, 3, 32, 2304])

+

+ # The TRT engine file can be cached to local disk.

+ det_option.set_trt_cache_file(args.det_model)

+ cls_option.set_trt_cache_file(args.cls_model)

+ rec_option.set_trt_cache_file(args.rec_model)

+

elif args.backend.lower() == "ort":

- option.use_ort_backend()

+ det_option.use_ort_backend()

+ cls_option.use_ort_backend()

+ rec_option.use_ort_backend()

+

elif args.backend.lower() == "paddle":

- option.use_paddle_infer_backend()

+ det_option.use_paddle_infer_backend()

+ cls_option.use_paddle_infer_backend()

+ rec_option.use_paddle_infer_backend()

+

elif args.backend.lower() == "openvino":

assert args.device.lower(

) == "cpu", "OpenVINO backend requires inference on device CPU."

- option.use_openvino_backend()

- return option

+ det_option.use_openvino_backend()

+ cls_option.use_openvino_backend()

+ rec_option.use_openvino_backend()

+

+ return det_option, cls_option, rec_option

args = parse_arguments()

@@ -111,49 +181,18 @@ rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

rec_label_file = args.rec_label_file

# Use the same deployment configuration for all three models.

-# Users can also configure each model separately as needed.

-runtime_option = build_option(args)

+# Users can also customize the configuration of each model as needed.

+det_option, cls_option, rec_option = build_option(args)

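-# The PPOCR cls and rec models now support batched inference.

-# The two variables below are used to set the TRT input shapes and, after the PPOCR models are initialized, to configure batched inference.

-# When deploying PP-OCR on hardware with limited dynamic-shape support (e.g. Huawei Ascend),

-# set both cls_batch_size and rec_batch_size to 1.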

-cls_batch_size = 1

-rec_batch_size = 6

-

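-# When using TRT, set dynamic shapes for each of the three models' runtimes, then create the models.

-# Note: create the detection model first, then set the classification model's dynamic input and create it; the same applies to the recognition model.

-# If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.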

-det_option = runtime_option

-det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

- [1, 3, 960, 960])

-# The TRT engine file can be cached to local disk.

-# det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

det_model = fd.vision.ocr.DBDetector(

det_model_file, det_params_file, runtime_option=det_option)

-cls_option = runtime_option

-cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

- [cls_batch_size, 3, 48, 320],

- [cls_batch_size, 3, 48, 1024])

-# The TRT engine file can be cached to local disk.

-# cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

cls_model = fd.vision.ocr.Classifier(

cls_model_file, cls_params_file, runtime_option=cls_option)

-rec_option = runtime_option

-rec_option.set_trt_input_shape("x", [1, 3, 32, 10],

- [rec_batch_size, 3, 32, 320],

- [rec_batch_size, 3, 32, 2304])

-# The TRT engine file can be cached to local disk.

-# rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

rec_model = fd.vision.ocr.Recognizer(

rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

-# When deploying PP-OCR on hardware with limited dynamic-shape support (e.g. Huawei Ascend),

-# uncomment the following line to enable static-shape inference for the rec model.

-# rec_model.preprocessor.static_shape_infer = True

-

# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed.

ppocr_v2 = fd.vision.ocr.PPOCRv2(

det_model=det_model, cls_model=cls_model, rec_model=rec_model)

@@ -161,8 +200,8 @@ ppocr_v2 = fd.vision.ocr.PPOCRv2(

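# Set the inference batch size for the cls and rec models.

# The value can be -1 or any positive integer.

# When set to -1, the cls and rec batch size defaults to the number of boxes detected by the det model.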

-ppocr_v2.cls_batch_size = cls_batch_size

-ppocr_v2.rec_batch_size = rec_batch_size

+ppocr_v2.cls_batch_size = args.cls_bs

+ppocr_v2.rec_batch_size = args.rec_bs

# Load the image to predict.

im = cv2.imread(args.image)
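
Note on the batch-size comments in the hunk above: the value may be -1 or any positive integer, and -1 means "use the number of boxes the det model found". The sketch below illustrates that rule in plain Python. `split_into_batches` is a hypothetical helper written for this note, not a FastDeploy function, and FastDeploy's internal batching may be organized differently.

```python
def split_into_batches(boxes, batch_size):
    """Group detected boxes into batches for the cls/rec models.

    Hypothetical helper for illustration only.
    batch_size == -1 puts every detected box into a single batch;
    a positive batch_size caps the length of each batch.
    """
    if batch_size == -1:
        # One batch sized to the number of detected boxes (none if empty).
        return [list(boxes)] if boxes else []
    if batch_size < 1:
        raise ValueError("batch_size must be -1 or a positive integer")
    return [
        list(boxes[i:i + batch_size])
        for i in range(0, len(boxes), batch_size)
    ]


if __name__ == "__main__":
    detected = list(range(8))  # stand-in for 8 detected text boxes
    print(split_into_batches(detected, 6))   # default --rec_bs in this diff
    print(split_into_batches(detected, -1))  # batch size follows box count
```

With the defaults in this diff (`--cls_bs 1`, `--rec_bs 6`), eight detected boxes would be processed one at a time by the cls model and in groups of at most six by the rec model, if batching works as sketched.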

diff --git a/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py b/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py

new file mode 100755

index 000000000..29055fdaa

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv2/python/infer_static_shape.py

@@ -0,0 +1,114 @@

+# Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import fastdeploy as fd

+import cv2

+import os

+

+

+def parse_arguments():

+ import argparse

+ import ast

+ parser = argparse.ArgumentParser()

+ parser.add_argument(

+ "--det_model", required=True, help="Path of Detection model of PPOCR.")

+ parser.add_argument(

+ "--cls_model",

+ required=True,

+ help="Path of Classification model of PPOCR.")

+ parser.add_argument(

+ "--rec_model",

+ required=True,

+ help="Path of Recognition model of PPOCR.")

+ parser.add_argument(

+ "--rec_label_file",

+ required=True,

+ help="Path of Recognition label file of PPOCR.")

+ parser.add_argument(

+ "--image", type=str, required=True, help="Path of test image file.")

+ parser.add_argument(

+ "--device",

+ type=str,

+ default='cpu',

+ help="Type of inference device, support 'cpu' or 'ascend'.")

+ parser.add_argument(

+ "--cpu_thread_num",

+ type=int,

+ default=9,

+ help="Number of threads while inference on CPU.")

+ return parser.parse_args()

+

+

+def build_option(args):

+

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ # Ascend is currently the only hardware that requires static-shape inference for PP-OCR.

+ if args.device.lower() == "ascend":

+ det_option.use_ascend()

+ cls_option.use_ascend()

+ rec_option.use_ascend()

+

+ return det_option, cls_option, rec_option

+

+

+args = parse_arguments()

+

+# Detection model: detects text boxes.

+det_model_file = os.path.join(args.det_model, "inference.pdmodel")

+det_params_file = os.path.join(args.det_model, "inference.pdiparams")

+# Classification model: orientation classification (optional).

+cls_model_file = os.path.join(args.cls_model, "inference.pdmodel")

+cls_params_file = os.path.join(args.cls_model, "inference.pdiparams")

+# Recognition model: text recognition.

+rec_model_file = os.path.join(args.rec_model, "inference.pdmodel")

+rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

+rec_label_file = args.rec_label_file

+

+det_option, cls_option, rec_option = build_option(args)

+

+det_model = fd.vision.ocr.DBDetector(

+ det_model_file, det_params_file, runtime_option=det_option)

+

+cls_model = fd.vision.ocr.Classifier(

+ cls_model_file, cls_params_file, runtime_option=cls_option)

+

+rec_model = fd.vision.ocr.Recognizer(

+ rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

+

+# Enable static-shape inference for the rec model.

+rec_model.preprocessor.static_shape_infer = True

+

+# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed.

+ppocr_v2 = fd.vision.ocr.PPOCRv2(

+ det_model=det_model, cls_model=cls_model, rec_model=rec_model)

+

+# With static-shape inference enabled, the cls and rec batch sizes must both be 1.

+ppocr_v2.cls_batch_size = 1

+ppocr_v2.rec_batch_size = 1

+

+# Load the image to predict.

+im = cv2.imread(args.image)

+

+# Predict and print the result.

+result = ppocr_v2.predict(im)

+

+print(result)

+

+# Visualize the result.

+vis_im = fd.vision.vis_ppocr(im, result)

+cv2.imwrite("visualized_result.jpg", vis_im)

+print("Visualized result saved in ./visualized_result.jpg")
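
Note on the uniform-size requirement: the README change in this PR says that images predicted consecutively on Ascend should share one size, but it does not say how to get there. One common option is to resize with a preserved aspect ratio and pad the remainder. The sketch below only computes the geometry in plain Python; `letterbox_geometry` is a hypothetical helper for this note, and whether padding or plain resizing suits a given model is an assumption to validate on real data.

```python
def letterbox_geometry(src_h, src_w, dst_h, dst_w):
    """Compute how to fit a src_h x src_w image into a dst_h x dst_w canvas.

    Hypothetical helper for illustration only.
    Returns (new_h, new_w, pad_bottom, pad_right): resize the image to
    new_h x new_w (aspect ratio preserved), then pad the bottom and right
    edges so the result is exactly dst_h x dst_w.
    """
    if min(src_h, src_w, dst_h, dst_w) <= 0:
        raise ValueError("all dimensions must be positive")
    # The limiting side decides the scale so the image never overflows.
    scale = min(dst_h / src_h, dst_w / src_w)
    new_h = min(dst_h, max(1, int(round(src_h * scale))))
    new_w = min(dst_w, max(1, int(round(src_w * scale))))
    return new_h, new_w, dst_h - new_h, dst_w - new_w


if __name__ == "__main__":
    # A 480x640 image placed on a 960x960 canvas.
    print(letterbox_geometry(480, 640, 960, 960))
```

The returned values could then be passed to `cv2.resize` and `cv2.copyMakeBorder` before calling `predict`, so that every image handed to the pipeline has the same shape.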

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt b/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

index 93540a7e8..8b2f7aa61 100644

--- a/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

+++ b/examples/vision/ocr/PP-OCRv3/cpp/CMakeLists.txt

@@ -12,3 +12,7 @@ include_directories(${FASTDEPLOY_INCS})

add_executable(infer_demo ${PROJECT_SOURCE_DIR}/infer.cc)

# Link the FastDeploy libraries

target_link_libraries(infer_demo ${FASTDEPLOY_LIBS})

+

+add_executable(infer_static_shape_demo ${PROJECT_SOURCE_DIR}/infer_static_shape.cc)

+# Link the FastDeploy libraries

+target_link_libraries(infer_static_shape_demo ${FASTDEPLOY_LIBS})

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/README.md b/examples/vision/ocr/PP-OCRv3/cpp/README.md

index 6f48a69ac..7f557a213 100755

--- a/examples/vision/ocr/PP-OCRv3/cpp/README.md

+++ b/examples/vision/ocr/PP-OCRv3/cpp/README.md

@@ -43,13 +43,16 @@ wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_

./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 3

# KunlunXin XPU inference

./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 4

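-# Huawei Ascend inference: enable static-shape inference for the rec model in the code, and set the inference batch size of the classification and recognition models to 1.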

-./infer_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 5

+# Huawei Ascend inference requires the static-shape demo. To predict multiple images consecutively, prepare all input images at the same size.

+./infer_static_shape_demo ./ch_PP-OCRv3_det_infer ./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer ./ppocr_keys_v1.txt ./12.jpg 1

```

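The commands above work only on Linux or macOS. For using the SDK on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment beforehand as described in:

+- [How to deploy on Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)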

+

After the run completes, the visualized result is shown below.

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/infer.cc b/examples/vision/ocr/PP-OCRv3/cpp/infer.cc

index 7fbcf835e..3b35c1d44 100755

--- a/examples/vision/ocr/PP-OCRv3/cpp/infer.cc

+++ b/examples/vision/ocr/PP-OCRv3/cpp/infer.cc

@@ -56,10 +56,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

- // Users could enable static shape infer for rec model when deploy PP-OCR on hardware

- // which can not support dynamic shape infer well, like Huawei Ascend series.

- // rec_model.GetPreprocessor().SetStaticShapeInfer(true);

-

assert(det_model.Initialized());

assert(cls_model.Initialized());

assert(rec_model.Initialized());

@@ -71,9 +67,6 @@ void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model

// Set inference batch size for cls model and rec model, the value could be -1 and 1 to positive infinity.

// When inference batch size is set to -1, it means that the inference batch size

// of the cls and rec models will be the same as the number of boxes detected by the det model.

- // When users enable static shape infer for rec model, the batch size of cls and rec model needs to be set to 1.

- // ppocr_v3.SetClsBatchSize(1);

- // ppocr_v3.SetRecBatchSize(1);

ppocr_v3.SetClsBatchSize(cls_batch_size);

ppocr_v3.SetRecBatchSize(rec_batch_size);

@@ -130,8 +123,6 @@ int main(int argc, char* argv[]) {

option.EnablePaddleToTrt();

} else if (flag == 4) {

option.UseKunlunXin();

- } else if (flag == 5) {

- option.UseAscend();

}

std::string det_model_dir = argv[1];

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc b/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc

new file mode 100755

index 000000000..aea3f5699

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc

@@ -0,0 +1,107 @@

+// Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+//

+// Licensed under the Apache License, Version 2.0 (the "License");

+// you may not use this file except in compliance with the License.

+// You may obtain a copy of the License at

+//

+// http://www.apache.org/licenses/LICENSE-2.0

+//

+// Unless required by applicable law or agreed to in writing, software

+// distributed under the License is distributed on an "AS IS" BASIS,

+// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+// See the License for the specific language governing permissions and

+// limitations under the License.

+

+#include "fastdeploy/vision.h"

+#ifdef WIN32

+const char sep = '\\';

+#else

+const char sep = '/';

+#endif

+

+void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model_dir, const std::string& rec_model_dir, const std::string& rec_label_file, const std::string& image_file, const fastdeploy::RuntimeOption& option) {

+ auto det_model_file = det_model_dir + sep + "inference.pdmodel";

+ auto det_params_file = det_model_dir + sep + "inference.pdiparams";

+

+ auto cls_model_file = cls_model_dir + sep + "inference.pdmodel";

+ auto cls_params_file = cls_model_dir + sep + "inference.pdiparams";

+

+ auto rec_model_file = rec_model_dir + sep + "inference.pdmodel";

+ auto rec_params_file = rec_model_dir + sep + "inference.pdiparams";

+

+ auto det_option = option;

+ auto cls_option = option;

+ auto rec_option = option;

+

+ auto det_model = fastdeploy::vision::ocr::DBDetector(det_model_file, det_params_file, det_option);

+ auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

+ auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

+

+ // Enable static-shape inference for the rec model when deploying PP-OCR on hardware

+ // that does not support dynamic-shape inference well, such as the Huawei Ascend series.

+ rec_model.GetPreprocessor().SetStaticShapeInfer(true);

+

+ assert(det_model.Initialized());

+ assert(cls_model.Initialized());

+ assert(rec_model.Initialized());

+

+ // The classification model is optional, so the PP-OCR can also be connected in series as follows

+ // auto ppocr_v3 = fastdeploy::pipeline::PPOCRv3(&det_model, &rec_model);

+ auto ppocr_v3 = fastdeploy::pipeline::PPOCRv3(&det_model, &cls_model, &rec_model);

+

+ // When static-shape inference is enabled for the rec model, the batch size of the cls and rec models must be set to 1.

+ ppocr_v3.SetClsBatchSize(1);

+ ppocr_v3.SetRecBatchSize(1);

+

+ if(!ppocr_v3.Initialized()){

+ std::cerr << "Failed to initialize PP-OCR." << std::endl;

+ return;

+ }

+

+ auto im = cv::imread(image_file);

+

+ fastdeploy::vision::OCRResult result;

+ if (!ppocr_v3.Predict(im, &result)) {

+ std::cerr << "Failed to predict." << std::endl;

+ return;

+ }

+

+ std::cout << result.Str() << std::endl;

+

+ auto vis_im = fastdeploy::vision::VisOcr(im, result);

+ cv::imwrite("vis_result.jpg", vis_im);

+ std::cout << "Visualized result saved in ./vis_result.jpg" << std::endl;

+}

+

+int main(int argc, char* argv[]) {

+ if (argc < 7) {

+ std::cout << "Usage: infer_static_shape_demo path/to/det_model path/to/cls_model "

+ "path/to/rec_model path/to/rec_label_file path/to/image "

+ "run_option, "

+ "e.g. ./infer_static_shape_demo ./ch_PP-OCRv3_det_infer "

+ "./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer "

+ "./ppocr_keys_v1.txt ./12.jpg 0"

+ << std::endl;

+ std::cout << "The data type of run_option is int, 0: run with cpu; 1: run "

+ "with ascend."

+ << std::endl;

+ return -1;

+ }

+

+ fastdeploy::RuntimeOption option;

+ int flag = std::atoi(argv[6]);

+

+ if (flag == 0) {

+ option.UseCpu();

+ } else if (flag == 1) {

+ option.UseAscend();

+ }

+

+ std::string det_model_dir = argv[1];

+ std::string cls_model_dir = argv[2];

+ std::string rec_model_dir = argv[3];

+ std::string rec_label_file = argv[4];

+ std::string test_image = argv[5];

+ InitAndInfer(det_model_dir, cls_model_dir, rec_model_dir, rec_label_file, test_image, option);

+ return 0;

+}

diff --git a/examples/vision/ocr/PP-OCRv3/python/README.md b/examples/vision/ocr/PP-OCRv3/python/README.md

index dd5965d33..3fcf372e0 100755

--- a/examples/vision/ocr/PP-OCRv3/python/README.md

+++ b/examples/vision/ocr/PP-OCRv3/python/README.md

@@ -35,8 +35,8 @@ python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2

python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

# KunlunXin XPU inference

python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device kunlunxin

-# Huawei Ascend inference

-python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

+# Huawei Ascend inference requires the static-shape script. To predict multiple images consecutively, prepare all input images at the same size.

+python infer_static_shape.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

```

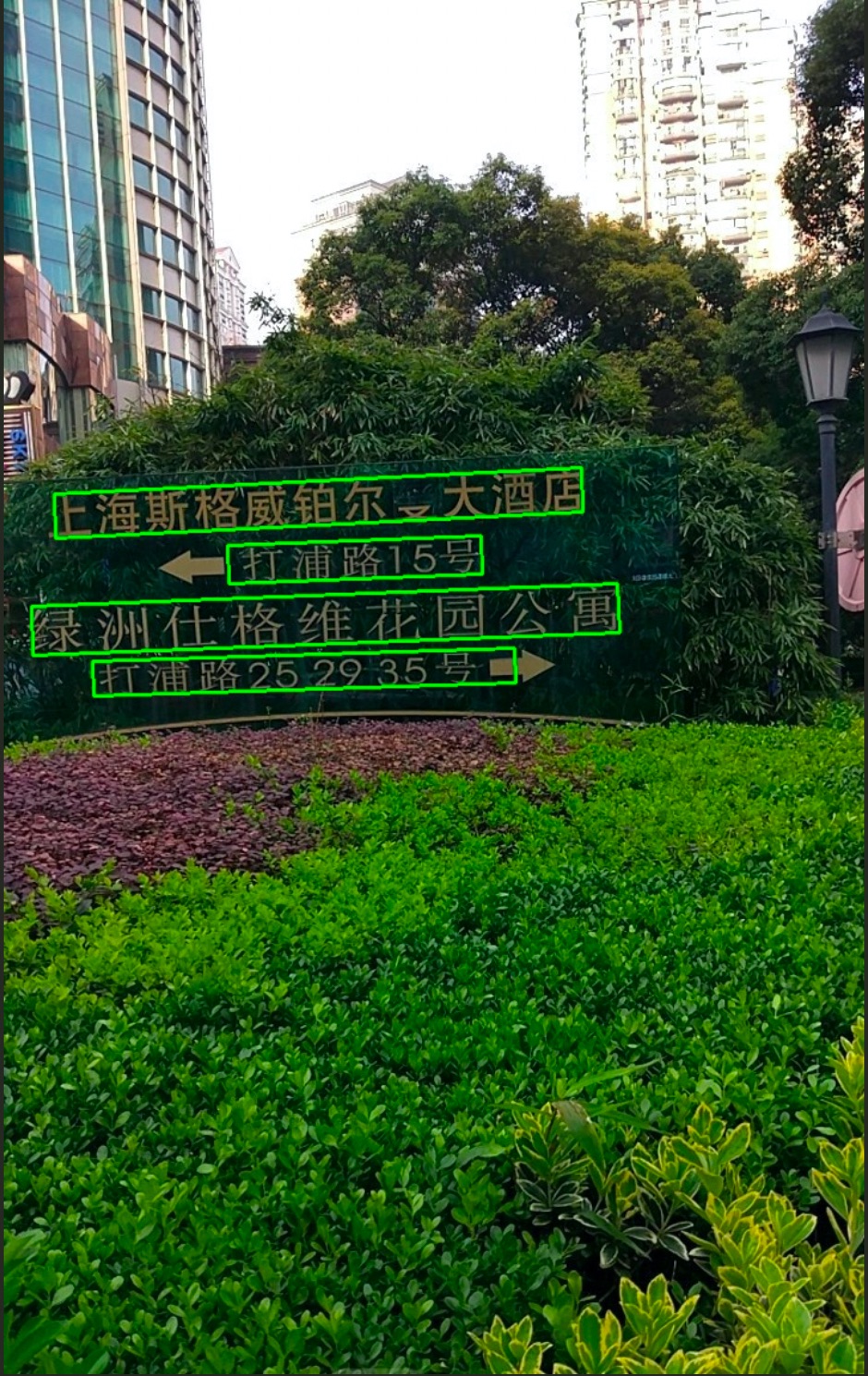

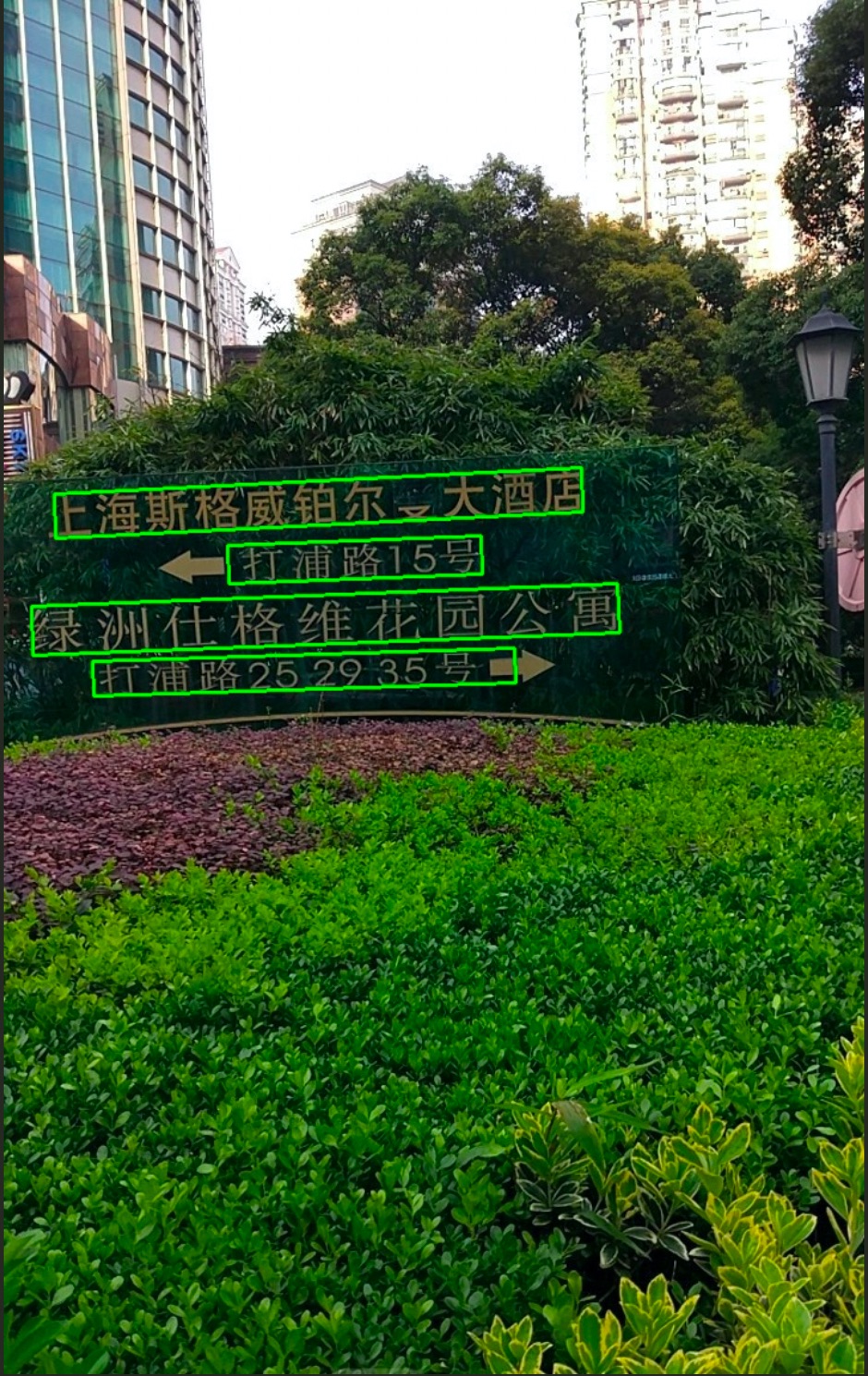

After the run completes, the visualized result is shown below.

diff --git a/examples/vision/ocr/PP-OCRv3/python/infer.py b/examples/vision/ocr/PP-OCRv3/python/infer.py

index f6da98bdb..6dabce80e 100755

--- a/examples/vision/ocr/PP-OCRv3/python/infer.py

+++ b/examples/vision/ocr/PP-OCRv3/python/infer.py

@@ -58,43 +58,113 @@ def parse_arguments():

type=int,

default=9,

help="Number of threads while inference on CPU.")

+ parser.add_argument(

+ "--cls_bs",

+ type=int,

+ default=1,

+ help="Classification model inference batch size.")

+ parser.add_argument(

+ "--rec_bs",

+ type=int,

+ default=6,

+ help="Recognition model inference batch size")

return parser.parse_args()

def build_option(args):

- option = fd.RuntimeOption()

- if args.device.lower() == "gpu":

- option.use_gpu(0)

- option.set_cpu_thread_num(args.cpu_thread_num)

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ det_option.set_cpu_thread_num(args.cpu_thread_num)

+ cls_option.set_cpu_thread_num(args.cpu_thread_num)

+ rec_option.set_cpu_thread_num(args.cpu_thread_num)

+

+ if args.device.lower() == "gpu":

+ det_option.use_gpu(args.device_id)

+ cls_option.use_gpu(args.device_id)

+ rec_option.use_gpu(args.device_id)

if args.device.lower() == "kunlunxin":

- option.use_kunlunxin()

- return option

+ det_option.use_kunlunxin()

+ cls_option.use_kunlunxin()

+ rec_option.use_kunlunxin()

- if args.device.lower() == "ascend":

- option.use_ascend()

- return option

+ return det_option, cls_option, rec_option

if args.backend.lower() == "trt":

assert args.device.lower(

) == "gpu", "TensorRT backend requires inference on device GPU."

- option.use_trt_backend()

+ det_option.use_trt_backend()

+ cls_option.use_trt_backend()

+ rec_option.use_trt_backend()

+

+ # Set the TRT input shapes.

+ # If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.rec_bs, 3, 48, 320],

+ [args.rec_bs, 3, 48, 2304])

+

+ # The TRT engine file can be cached to local disk.

+ det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

+ cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

+ rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

+

elif args.backend.lower() == "pptrt":

assert args.device.lower(

) == "gpu", "Paddle-TensorRT backend requires inference on device GPU."

- option.use_trt_backend()

- option.enable_paddle_trt_collect_shape()

- option.enable_paddle_to_trt()

+ det_option.use_trt_backend()

+ det_option.enable_paddle_trt_collect_shape()

+ det_option.enable_paddle_to_trt()

+

+ cls_option.use_trt_backend()

+ cls_option.enable_paddle_trt_collect_shape()

+ cls_option.enable_paddle_to_trt()

+

+ rec_option.use_trt_backend()

+ rec_option.enable_paddle_trt_collect_shape()

+ rec_option.enable_paddle_to_trt()

+

+ # Set the TRT input shapes.

+ # If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.

+ det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

+ [1, 3, 960, 960])

+ cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.cls_bs, 3, 48, 320],

+ [args.cls_bs, 3, 48, 1024])

+ rec_option.set_trt_input_shape("x", [1, 3, 48, 10],

+ [args.rec_bs, 3, 48, 320],

+ [args.rec_bs, 3, 48, 2304])

+

+ # The TRT engine file can be cached to local disk.

+ det_option.set_trt_cache_file(args.det_model)

+ cls_option.set_trt_cache_file(args.cls_model)

+ rec_option.set_trt_cache_file(args.rec_model)

+

elif args.backend.lower() == "ort":

- option.use_ort_backend()

+ det_option.use_ort_backend()

+ cls_option.use_ort_backend()

+ rec_option.use_ort_backend()

+

elif args.backend.lower() == "paddle":

- option.use_paddle_infer_backend()

+ det_option.use_paddle_infer_backend()

+ cls_option.use_paddle_infer_backend()

+ rec_option.use_paddle_infer_backend()

+

elif args.backend.lower() == "openvino":

assert args.device.lower(

) == "cpu", "OpenVINO backend requires inference on device CPU."

- option.use_openvino_backend()

- return option

+ det_option.use_openvino_backend()

+ cls_option.use_openvino_backend()

+ rec_option.use_openvino_backend()

+

+ return det_option, cls_option, rec_option

args = parse_arguments()

@@ -111,49 +181,18 @@ rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

rec_label_file = args.rec_label_file

# Use the same deployment configuration for all three models.

-# Users can also configure each model separately as needed.

-runtime_option = build_option(args)

+# Users can also customize the configuration of each model as needed.

+det_option, cls_option, rec_option = build_option(args)

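-# The PPOCR cls and rec models now support batched inference.

-# The two variables below are used to set the TRT input shapes and, after the PPOCR models are initialized, to configure batched inference.

-# When deploying PP-OCR on hardware with limited dynamic-shape support (e.g. Huawei Ascend),

-# set both cls_batch_size and rec_batch_size to 1.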

-cls_batch_size = 1

-rec_batch_size = 6

-

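-# When using TRT, set dynamic shapes for each of the three models' runtimes, then create the models.

-# Note: create the detection model first, then set the classification model's dynamic input and create it; the same applies to the recognition model.

-# If you change the detection model's input shape, we recommend keeping its height and width multiples of 32.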

-det_option = runtime_option

-det_option.set_trt_input_shape("x", [1, 3, 64, 64], [1, 3, 640, 640],

- [1, 3, 960, 960])

-# The TRT engine file can be cached to local disk.

-# det_option.set_trt_cache_file(args.det_model + "/det_trt_cache.trt")

det_model = fd.vision.ocr.DBDetector(

det_model_file, det_params_file, runtime_option=det_option)

-cls_option = runtime_option

-cls_option.set_trt_input_shape("x", [1, 3, 48, 10],

- [cls_batch_size, 3, 48, 320],

- [cls_batch_size, 3, 48, 1024])

-# The TRT engine file can be cached to local disk.

-# cls_option.set_trt_cache_file(args.cls_model + "/cls_trt_cache.trt")

cls_model = fd.vision.ocr.Classifier(

cls_model_file, cls_params_file, runtime_option=cls_option)

-rec_option = runtime_option

-rec_option.set_trt_input_shape("x", [1, 3, 48, 10],

- [rec_batch_size, 3, 48, 320],

- [rec_batch_size, 3, 48, 2304])

-# The TRT engine file can be cached to local disk.

-# rec_option.set_trt_cache_file(args.rec_model + "/rec_trt_cache.trt")

rec_model = fd.vision.ocr.Recognizer(

rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

-# When deploying PP-OCR on hardware with limited dynamic-shape support (e.g. Huawei Ascend),

-# uncomment the following line to enable static-shape inference for the rec model.

-# rec_model.preprocessor.static_shape_infer = True

-

# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed.

ppocr_v3 = fd.vision.ocr.PPOCRv3(

det_model=det_model, cls_model=cls_model, rec_model=rec_model)

@@ -161,8 +200,8 @@ ppocr_v3 = fd.vision.ocr.PPOCRv3(

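# Set the inference batch size for the cls and rec models.

# The value can be -1 or any positive integer.

# When set to -1, the cls and rec batch size defaults to the number of boxes detected by the det model.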

-ppocr_v3.cls_batch_size = cls_batch_size

-ppocr_v3.rec_batch_size = rec_batch_size

+ppocr_v3.cls_batch_size = args.cls_bs

+ppocr_v3.rec_batch_size = args.rec_bs

# Load the image to predict.

im = cv2.imread(args.image)
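
Note on the TRT shape ranges set in `build_option`: each `set_trt_input_shape` call in this diff passes three shapes, which appear to be the minimum, optimal, and maximum input shapes, and the comments recommend that the detection model's height and width be multiples of 32. The sketch below checks a candidate shape against such a range in plain Python. `shape_in_range` and `round_up_to_multiple` are hypothetical helpers written for this note, not FastDeploy APIs; the shape values are copied from the PP-OCRv3 rec settings above with the default `--rec_bs 6`.

```python
def shape_in_range(shape, min_shape, max_shape):
    """Return True if every dimension of shape lies within [min, max].

    Hypothetical helper for illustration only.
    """
    if not (len(shape) == len(min_shape) == len(max_shape)):
        return False
    return all(
        lo <= dim <= hi
        for dim, lo, hi in zip(shape, min_shape, max_shape)
    )


def round_up_to_multiple(value, base=32):
    """Round value up to the nearest multiple of base."""
    return ((value + base - 1) // base) * base


if __name__ == "__main__":
    rec_bs = 6  # default of --rec_bs in this diff
    rec_min = [1, 3, 48, 10]
    rec_max = [rec_bs, 3, 48, 2304]

    print(shape_in_range([6, 3, 48, 320], rec_min, rec_max))
    print(shape_in_range([8, 3, 48, 320], rec_min, rec_max))
    print(round_up_to_multiple(650))
```

Because the maximum shape is built from `args.rec_bs`, a batch larger than the value passed on the command line would fall outside the configured range, which is one reason the batch sizes are now parsed before the options are built.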

diff --git a/examples/vision/ocr/PP-OCRv3/python/infer_static_shape.py b/examples/vision/ocr/PP-OCRv3/python/infer_static_shape.py

new file mode 100755

index 000000000..e707d378c

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv3/python/infer_static_shape.py

@@ -0,0 +1,114 @@

+# Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import fastdeploy as fd

+import cv2

+import os

+

+

+def parse_arguments():

+ import argparse

+ import ast

+ parser = argparse.ArgumentParser()

+ parser.add_argument(

+ "--det_model", required=True, help="Path of Detection model of PPOCR.")

+ parser.add_argument(

+ "--cls_model",

+ required=True,

+ help="Path of Classification model of PPOCR.")

+ parser.add_argument(

+ "--rec_model",

+ required=True,

+ help="Path of Recognition model of PPOCR.")

+ parser.add_argument(

+ "--rec_label_file",

+ required=True,

+ help="Path of Recognition label file of PPOCR.")

+ parser.add_argument(

+ "--image", type=str, required=True, help="Path of test image file.")

+ parser.add_argument(

+ "--device",

+ type=str,

+ default='cpu',

+ help="Type of inference device, support 'cpu' or 'ascend'.")

+ parser.add_argument(

+ "--cpu_thread_num",

+ type=int,

+ default=9,

+ help="Number of threads while inference on CPU.")

+ return parser.parse_args()

+

+

+def build_option(args):

+

+ det_option = fd.RuntimeOption()

+ cls_option = fd.RuntimeOption()

+ rec_option = fd.RuntimeOption()

+

+ # Ascend is currently the only hardware that requires static-shape inference for PP-OCR.

+ if args.device.lower() == "ascend":

+ det_option.use_ascend()

+ cls_option.use_ascend()

+ rec_option.use_ascend()

+

+ return det_option, cls_option, rec_option

+

+

+args = parse_arguments()

+

+# Detection model: detects text boxes.

+det_model_file = os.path.join(args.det_model, "inference.pdmodel")

+det_params_file = os.path.join(args.det_model, "inference.pdiparams")

+# Classification model: orientation classification (optional).

+cls_model_file = os.path.join(args.cls_model, "inference.pdmodel")

+cls_params_file = os.path.join(args.cls_model, "inference.pdiparams")

+# Recognition model: text recognition.

+rec_model_file = os.path.join(args.rec_model, "inference.pdmodel")

+rec_params_file = os.path.join(args.rec_model, "inference.pdiparams")

+rec_label_file = args.rec_label_file

+

+det_option, cls_option, rec_option = build_option(args)

+

+det_model = fd.vision.ocr.DBDetector(

+ det_model_file, det_params_file, runtime_option=det_option)

+

+cls_model = fd.vision.ocr.Classifier(

+ cls_model_file, cls_params_file, runtime_option=cls_option)

+

+rec_model = fd.vision.ocr.Recognizer(

+ rec_model_file, rec_params_file, rec_label_file, runtime_option=rec_option)

+

+# Enable static-shape inference for the rec model.

+rec_model.preprocessor.static_shape_infer = True

+

+# Create PP-OCR by chaining the three models; cls_model is optional and can be set to None if not needed.

+ppocr_v3 = fd.vision.ocr.PPOCRv3(

+ det_model=det_model, cls_model=cls_model, rec_model=rec_model)

+

+# With static-shape inference enabled, the cls and rec batch sizes must both be 1.

+ppocr_v3.cls_batch_size = 1

+ppocr_v3.rec_batch_size = 1

+

+# Load the image to predict.

+im = cv2.imread(args.image)

+

+# Predict and print the result.

+result = ppocr_v3.predict(im)

+

+print(result)

+

+# Visualize the result.

+vis_im = fd.vision.vis_ppocr(im, result)

+cv2.imwrite("visualized_result.jpg", vis_im)

+print("Visualized result saved in ./visualized_result.jpg")

diff --git a/examples/vision/segmentation/paddleseg/cpp/README.md b/examples/vision/segmentation/paddleseg/cpp/README.md

index 6b1be6e5b..07f9f4c62 100755

--- a/examples/vision/segmentation/paddleseg/cpp/README.md

+++ b/examples/vision/segmentation/paddleseg/cpp/README.md

@@ -46,6 +46,9 @@ wget https://paddleseg.bj.bcebos.com/dygraph/demo/cityscapes_demo.png

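The commands above work only on Linux or macOS. For using the SDK on Windows, see:

- [How to use the FastDeploy C++ SDK on Windows](../../../../../docs/cn/faq/use_sdk_on_windows.md)

+If you deploy on a Huawei Ascend NPU, initialize the deployment environment beforehand as described in:

+- [How to deploy on Huawei Ascend NPU](../../../../../docs/cn/faq/use_sdk_on_ascend.md)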

+

## PaddleSeg C++ Interface

### PaddleSeg Class

diff --git a/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc b/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc

new file mode 100755

index 000000000..aea3f5699

--- /dev/null

+++ b/examples/vision/ocr/PP-OCRv3/cpp/infer_static_shape.cc

@@ -0,0 +1,107 @@

+// Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

+//

+// Licensed under the Apache License, Version 2.0 (the "License");

+// you may not use this file except in compliance with the License.

+// You may obtain a copy of the License at

+//

+// http://www.apache.org/licenses/LICENSE-2.0

+//

+// Unless required by applicable law or agreed to in writing, software

+// distributed under the License is distributed on an "AS IS" BASIS,

+// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+// See the License for the specific language governing permissions and

+// limitations under the License.

+

+#include "fastdeploy/vision.h"

+#ifdef WIN32

+const char sep = '\\';

+#else

+const char sep = '/';

+#endif

+

+void InitAndInfer(const std::string& det_model_dir, const std::string& cls_model_dir, const std::string& rec_model_dir, const std::string& rec_label_file, const std::string& image_file, const fastdeploy::RuntimeOption& option) {

+ auto det_model_file = det_model_dir + sep + "inference.pdmodel";

+ auto det_params_file = det_model_dir + sep + "inference.pdiparams";

+

+ auto cls_model_file = cls_model_dir + sep + "inference.pdmodel";

+ auto cls_params_file = cls_model_dir + sep + "inference.pdiparams";

+

+ auto rec_model_file = rec_model_dir + sep + "inference.pdmodel";

+ auto rec_params_file = rec_model_dir + sep + "inference.pdiparams";

+

+ auto det_option = option;

+ auto cls_option = option;

+ auto rec_option = option;

+

+ auto det_model = fastdeploy::vision::ocr::DBDetector(det_model_file, det_params_file, det_option);

+ auto cls_model = fastdeploy::vision::ocr::Classifier(cls_model_file, cls_params_file, cls_option);

+ auto rec_model = fastdeploy::vision::ocr::Recognizer(rec_model_file, rec_params_file, rec_label_file, rec_option);

+

+ // Enable static shape inference for the rec model when deploying PP-OCR on hardware

+ // that does not support dynamic shape inference well, such as the Huawei Ascend series.

+ rec_model.GetPreprocessor().SetStaticShapeInfer(true);

+

+ assert(det_model.Initialized());

+ assert(cls_model.Initialized());

+ assert(rec_model.Initialized());

+

+ // The classification model is optional, so the PP-OCR can also be connected in series as follows

+ // auto ppocr_v3 = fastdeploy::pipeline::PPOCRv3(&det_model, &rec_model);

+ auto ppocr_v3 = fastdeploy::pipeline::PPOCRv3(&det_model, &cls_model, &rec_model);

+

+ // When static shape inference is enabled for the rec model, the batch size of the cls and rec models must be set to 1.

+ ppocr_v3.SetClsBatchSize(1);

+ ppocr_v3.SetRecBatchSize(1);

+

+ if (!ppocr_v3.Initialized()) {

+ std::cerr << "Failed to initialize PP-OCR." << std::endl;

+ return;

+ }

+

+ auto im = cv::imread(image_file);

+

+ fastdeploy::vision::OCRResult result;

+ if (!ppocr_v3.Predict(im, &result)) {

+ std::cerr << "Failed to predict." << std::endl;

+ return;

+ }

+

+ std::cout << result.Str() << std::endl;

+

+ auto vis_im = fastdeploy::vision::VisOcr(im, result);

+ cv::imwrite("vis_result.jpg", vis_im);

+ std::cout << "Visualized result saved in ./vis_result.jpg" << std::endl;

+}

+

+int main(int argc, char* argv[]) {

+ if (argc < 7) {

+ std::cout << "Usage: infer_demo path/to/det_model path/to/cls_model "

+ "path/to/rec_model path/to/rec_label_file path/to/image "

+ "run_option, "

+ "e.g. ./infer_demo ./ch_PP-OCRv3_det_infer "

+ "./ch_ppocr_mobile_v2.0_cls_infer ./ch_PP-OCRv3_rec_infer "

+ "./ppocr_keys_v1.txt ./12.jpg 0"

+ << std::endl;

+ std::cout << "The data type of run_option is int, 0: run with cpu; 1: run "

+ "with ascend."

+ << std::endl;

+ return -1;

+ }

+

+ fastdeploy::RuntimeOption option;

+ int flag = std::atoi(argv[6]);

+

+ if (flag == 0) {

+ option.UseCpu();

+ } else if (flag == 1) {

+ option.UseAscend();

+ }

+

+ std::string det_model_dir = argv[1];

+ std::string cls_model_dir = argv[2];

+ std::string rec_model_dir = argv[3];

+ std::string rec_label_file = argv[4];

+ std::string test_image = argv[5];

+ InitAndInfer(det_model_dir, cls_model_dir, rec_model_dir, rec_label_file, test_image, option);

+ return 0;

+}

diff --git a/examples/vision/ocr/PP-OCRv3/python/README.md b/examples/vision/ocr/PP-OCRv3/python/README.md

index dd5965d33..3fcf372e0 100755

--- a/examples/vision/ocr/PP-OCRv3/python/README.md

+++ b/examples/vision/ocr/PP-OCRv3/python/README.md

@@ -35,8 +35,8 @@ python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2

python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

# KunlunXin XPU inference

python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device kunlunxin

-# Huawei Ascend inference

-python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

+# Huawei Ascend inference requires the static-shape script. To predict on a sequence of images, prepare the input images at a uniform size beforehand

+python infer_static_shape.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device ascend

```

After the run completes, the visualized result is shown in the figure below

diff --git a/examples/vision/ocr/PP-OCRv3/python/infer.py b/examples/vision/ocr/PP-OCRv3/python/infer.py

index f6da98bdb..6dabce80e 100755

--- a/examples/vision/ocr/PP-OCRv3/python/infer.py

+++ b/examples/vision/ocr/PP-OCRv3/python/infer.py

@@ -58,43 +58,113 @@ def parse_arguments():

type=int,

default=9,

help="Number of threads while inference on CPU.")

+ parser.add_argument(

+ "--cls_bs",

+ type=int,

+ default=1,

+ help="Classification model inference batch size.")

+ parser.add_argument(

+ "--rec_bs",

+ type=int,

+ default=6,

+ help="Recognition model inference batch size.")

return parser.parse_args()

def build_option(args):

- option = fd.RuntimeOption()

- if args.device.lower() == "gpu":

- option.use_gpu(0)